Are you ready to write neural network architectures and algorithms from scratch? I can sense some of you panicking already!

There are several reasons why you might want to start writing machine learning algorithms from scratch.

Here are some of the reasons you might write your own neural network architectures:

- Customisations: You need unique functionality

- Experience: You want to better understand how to produce machine learning systems

- Necessity: You want to use an architecture that is not available as a pre-built implementation

- Fun: Sometimes it’s just fun to play around with some data and see what happens!

You can practice building out the steps of different algorithms using code and building up architectures of neural networks.

What is an artificial neural network?

Let’s start with covering the overall structure of an artificial neural network.

An artificial neural network architecture is a system of calculations and feedback loops. The system is designed to allow a computer to mimic some of the processes used by the human brain to learn.

But what does that mean in practical terms for you, the machine learning practitioner?

Well, let me help out here.

In an artificial neural network, you are creating a network (well duh…) of different calculations that stimulate different ‘neurons’ within a hidden layer.

Ok ok, I’m getting a bit technical here. Let me help you out with a diagram.

What is the structure of an Artificial Neural Network?

So there are three main parts of an artificial neural network:

- Input layer

- Hidden Layer (where the neurons live)

- Output layer

The input layer forms the input vector. This vector holds the feature values for a single instance of your data.

The output layer is the result, or output, of your model.

Then we have the exciting part, the hidden layer. This is where the neurons live.
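To make the three parts concrete, here is a minimal sketch of a single pass through an input layer, one hidden layer and an output layer, written in plain Python. The layer sizes (3 inputs, 4 hidden neurons, 1 output), the random weights and the sigmoid activation are all assumptions picked for illustration, not a recommended setup.

```python
import math
import random

def dense(inputs, weights, biases):
    """One fully connected layer: a weighted sum of the inputs plus a bias per neuron."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def sigmoid(values):
    """Squash each value into the range (0, 1)."""
    return [1.0 / (1.0 + math.exp(-v)) for v in values]

# Assumed sizes for illustration: 3 input features, 4 hidden neurons, 1 output
rng = random.Random(0)
w_hidden = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(4)]
b_hidden = [0.0] * 4
w_out = [[rng.uniform(-1, 1) for _ in range(4)]]
b_out = [0.0]

def forward(x):
    hidden = sigmoid(dense(x, w_hidden, b_hidden))  # hidden layer: where the neurons live
    return sigmoid(dense(hidden, w_out, b_out))     # output layer: the model's result

# The input layer is just the feature vector for one instance of your data
prediction = forward([0.5, -1.2, 3.0])
```

The weights here are random, so the prediction is meaningless until the network is trained. The point is only to show how data flows from one layer to the next.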

How to think about the different layers when building your own neural network architectures

As we have discussed, there are three sections within a neural network: the input layer, the hidden layer and the output layer.

The interesting part when we come to think about defining your own neural network architecture is the hidden layer.

The hidden layer doesn’t have to be just one layer. It can be as many layers as you like.

This is where you get to experiment.
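One way to make that experimentation easy is to describe the whole network as a list of layer sizes, so adding a hidden layer is just adding a number to the list. The sketch below assumes fully connected layers with a ReLU activation between them. The helper names `build_network` and `forward` are made up for this example.

```python
import random

def build_network(layer_sizes, seed=0):
    """Create random weights and zero biases for each pair of consecutive layers."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out
        layers.append((weights, biases))
    return layers

def relu(values):
    return [max(0.0, v) for v in values]

def forward(layers, x):
    """Pass x through every layer, applying ReLU to all but the final (output) layer."""
    for i, (weights, biases) in enumerate(layers):
        x = [sum(w * xi for w, xi in zip(row, x)) + b
             for row, b in zip(weights, biases)]
        if i < len(layers) - 1:
            x = relu(x)
    return x

# Same input and output sizes, different numbers of hidden layers
shallow = build_network([4, 8, 2])        # one hidden layer of 8 neurons
deep = build_network([4, 8, 8, 8, 2])     # three hidden layers of 8 neurons

output = forward(deep, [1.0, 2.0, 3.0, 4.0])
```

Changing the list is all it takes to try a different depth or width.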

Experimenting with neural networks and deep learning

Within the hidden layer, you can build up different functionality to manipulate your data to identify features within it. These features will help you make better predictions.

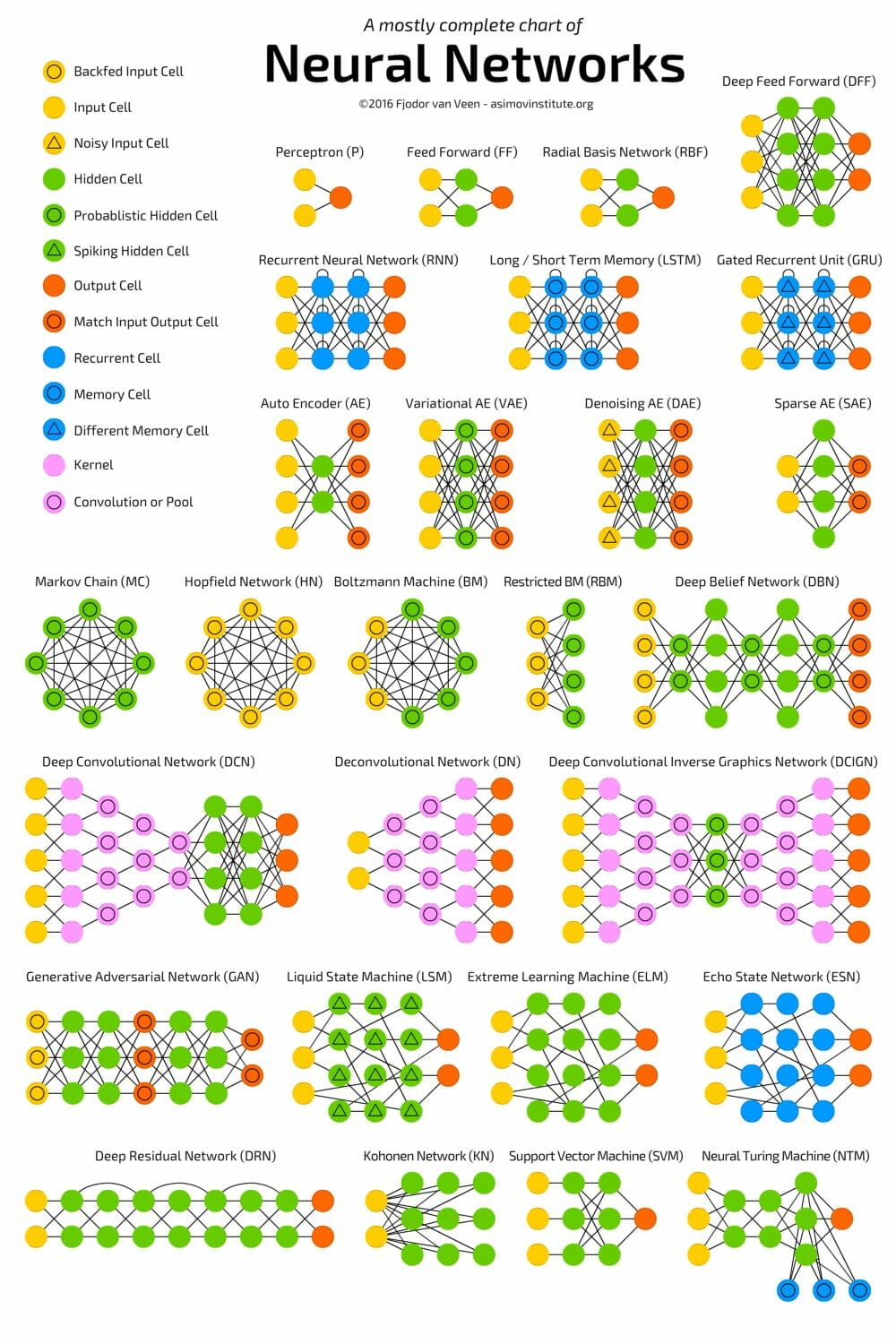

Within the literature, there are multiple standard neural network architectures that have been shown to be effective for different tasks.

However, when you’re getting started building out your own neural network architectures, I encourage you to explore different options.

Taking some of the standard formats as a base, you can use different techniques to build out your own structure.

For example with a convolutional neural network, you can take the standard code for convolution layers and activation functions as your base.

You can play around with how you structure these within your hidden layers to better understand your image data.
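If you want to see what a convolution layer followed by an activation function is actually doing, here is a tiny sketch in plain Python. It assumes a single-channel image, no padding and a stride of 1, and the 2x2 vertical-edge kernel is hand-picked for illustration. In a real network the kernel values are learned, and you would use a library implementation rather than nested loops.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and sum the products.

    Strictly this is cross-correlation, which is what deep learning
    libraries usually call convolution.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[i + di][j + dj] * kernel[di][dj]
                 for di in range(kh) for dj in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]

def relu2d(feature_map):
    """Activation function: keep positive responses, zero out the rest."""
    return [[max(0, v) for v in row] for row in feature_map]

# A 4x4 toy image: dark on the left, bright on the right
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hand-picked kernel that responds to a dark-to-bright vertical edge
vertical_edge = [
    [-1, 1],
    [-1, 1],
]

features = relu2d(conv2d(image, vertical_edge))
# features == [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The middle column lights up because that is where the edge sits. Swapping in a different kernel, or stacking a second convolution on top of `features`, is a quick way to build intuition for what each layer picks out.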

You want to focus on developing an understanding of the features of your data.

Try different numbers of hidden layers, different activation functions and adding in skip layers.
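As a rough sketch of two of those knobs, the block below shows a skip (often called a residual connection) where the input to a layer is added back onto its output, with the activation function passed in by name. It assumes the layer keeps the same number of features so the addition lines up. The `ACTIVATIONS` table and `residual_block` helper are made-up names for this example.

```python
import math

def dense(inputs, weights, biases):
    """One fully connected layer: weighted sum plus bias for each neuron."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

# Activation functions you might want to swap between while experimenting
ACTIVATIONS = {
    "relu": lambda v: max(0.0, v),
    "tanh": math.tanh,
}

def residual_block(x, weights, biases, activation="relu"):
    """Apply a dense layer and activation, then add the original input back on."""
    act = ACTIVATIONS[activation]
    transformed = [act(v) for v in dense(x, weights, biases)]
    # The skip: the input bypasses the layer and is summed with its output
    return [t + xi for t, xi in zip(transformed, x)]

# Identity weights make the effect of the activation and the skip easy to see
identity = [[1.0, 0.0], [0.0, 1.0]]
out_relu = residual_block([1.0, -1.0], identity, [0.0, 0.0], activation="relu")
out_tanh = residual_block([1.0, -1.0], identity, [0.0, 0.0], activation="tanh")
# out_relu == [2.0, -1.0]
```

With ReLU, the negative input is zeroed by the activation but still survives through the skip, which is one reason skips are worth trying in deeper networks.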

When you experiment, you will gain experience and learn about what the different layers are used for. Also, you might find some interesting features in your images.


Getting strategic with your neural network

As you get more experienced, you will want to start thinking about building up neural networks in a more strategic way.

For each layer you add to your network, think about what it is doing to your data. How does this relate to the way you want that data to be analysed?

A lot of the most impactful advances in machine learning technology have come about through people thinking about how human beings process data.

When you think about building out the deep learning structure of your network, think about what it makes sense to try.

If you’re looking to find new features within the dataset, try manipulating it in both directions: contracting the data into fewer features, then expanding it back out into more. You can repeat this process multiple times within your hidden layers.
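One simple way to plan a contract-then-expand structure is to work out the layer sizes first. The helper below is a made-up illustration: it assumes you halve the number of features at each step down to a chosen bottleneck and then mirror the same sizes on the way back out. Halving is only one possible schedule.

```python
def hourglass_sizes(n_features, bottleneck):
    """Layer sizes that contract by halving, then expand back out symmetrically.

    Halving stops at the last size that is still at or above `bottleneck`.
    """
    sizes = [n_features]
    while sizes[-1] // 2 >= bottleneck:
        sizes.append(sizes[-1] // 2)
    # Mirror the contracting half (without repeating the narrowest layer)
    return sizes + sizes[-2::-1]

sizes = hourglass_sizes(16, 4)
# sizes == [16, 8, 4, 8, 16]
```

Each number is the width of one layer, so this list describes a network that squeezes 16 features down to 4 and then expands them back to 16.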

This article on digitalvidya covers a lot of different structures for neural networks. The networks it describes have been successful, and you can use their structures as a base for experiments on your own data.

Things to consider when designing a neural network

There are two main points to consider when designing your own network:

- The problem that you are trying to solve

- The previous work in the space

The reason you want to look at the previous work in the space is that you can use it to make your own experiments more efficient.

As much as it’s good to try new things and test different applications, the prior art in the space will really help you to start off on the right foot.

Some examples of how to start building a neural network

To help you get started, here are five points to consider when you think about building your own neural network architecture:

- What problem space are you working on?

- Architectures that exist that you can use

- State of the art – anything published recently in this space?

- Transfer learning – where can you build on something?

- Existing networks for new applications – does it make sense to try out a neural network architecture in a new field?

How to write your own architecture for deep learning step by step

Now for the fun stuff!

Inspired by the fast.ai course, I decided to do some experimentation with convolutional neural networks on the Pets dataset.

In these experiments my goal was to:

- Build on successful architectures with my own layer structure

- Understand what was happening at the different convolution network layers
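A general way to see what is happening at each layer is to keep every intermediate output as the data moves through the network, then inspect them afterwards. The sketch below is not the code from these experiments. It uses three toy stand-in layers and a made-up helper, `forward_with_activations`, purely to show the idea.

```python
def forward_with_activations(layers, x):
    """Run x through each (name, function) layer and keep every intermediate output."""
    activations = {"input": x}
    for name, layer in layers:
        x = layer(x)
        activations[name] = x
    return activations

# Toy stand-ins for real layers, chosen so the numbers are easy to follow
layers = [
    ("double", lambda v: [2 * a for a in v]),
    ("relu", lambda v: [max(0, a) for a in v]),
    ("sum", lambda v: [sum(v)]),
]

acts = forward_with_activations(layers, [1, -2, 3])
# acts["double"] == [2, -4, 6]
# acts["relu"] == [2, 0, 6]
# acts["sum"] == [8]
```

With a real convolutional network, the stored outputs would be feature maps that you could plot as images to see which parts of the picture each layer responds to.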
